Featured Speaker

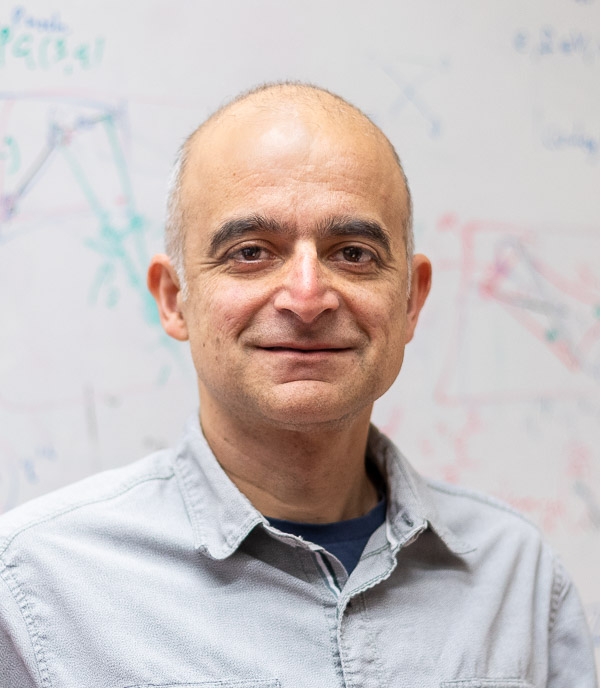

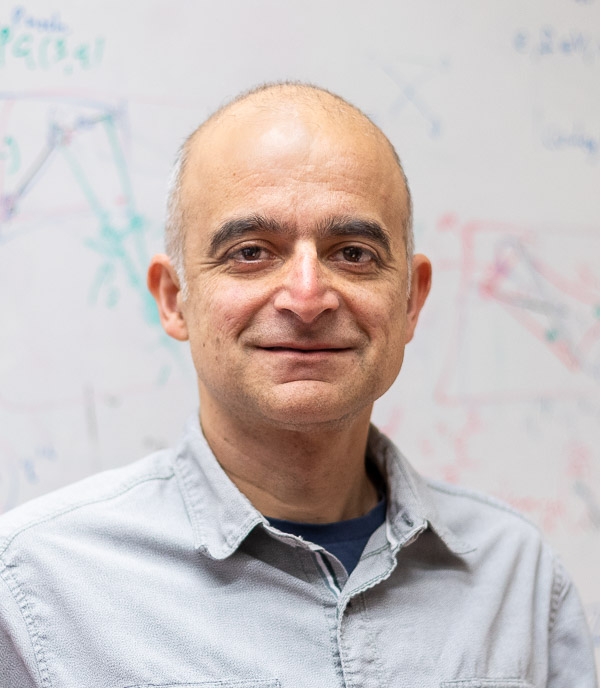

Buckingham Scholar: Dhruv Mubayi (University of Illinois Chicago)

"Randomness and determinism in Ramsey theory" and "Pentagons in triple systems with applications"

September 18-19, 2026.

We encourage contributed talks (on any topic) from both students and faculty. You can submit a talk on the registration form.

Organizing committee

Bohn Lecturer: József Balogh (University of Illinois Urbana-Champaign)

"Ramsey-Turán type of Theorems in extremal graph theory" and "Clique covers and decompositions of cliques of graphs"

The Welcome Session will begin Friday, September 18. Signs posted inside the building will direct you to the Registration area, where you can pick up your registration packet. The packet includes the full program with abstracts, meal tickets, your registration receipt, and your name tag, which you will need to attend any of the meals. Please see Parking Options below for information about visitor parking. Without a parking pass, you risk receiving a parking ticket.

You can register here. (Registration Deadline: Sept. 12, 2026)

You can pay for registration before arriving at the Annual Math Conference.

Note: Miami University is a cashless campus. Please be prepared to use a debit or credit card for registration and meal fees at the conference.

Please book rooms as soon as possible, as hotels frequently sell out long before the conference! Reservations must be made directly with the hotel, either by phone or through its booking link. Rates are per night and do not include taxes. Individual establishments set their own minimum rates because of the strong demand for lodging during the fall season.

Marcum Hotel and Conference Center

Oxford, OH (located on Miami's Oxford Campus)

513-529-6911

Group code: Math Conference

Cut-off date: 9/11/2024

Additional Area Hotels and Inns

A permit is required to park in any Miami parking lot that is not a Pay-To-Park (metered) lot. Your license plate will serve as your permit, and a link will be available closer to conference time so that you can register your vehicle. If your vehicle is not registered, you will need to park in a garage or metered lot instead. To familiarize yourself with parking policies and the various parking areas, visit the Miami Parking Website and click on the ‘Where do I park?’ block. The closest parking to our new location will be the Cook Field area.

Please see the map of all parking areas on campus.

We also have two on-campus parking garages, the North Garage and the South Garage, where you pay by the hour. The North Garage charges $2.00 for the first hour and $1.00 for each additional hour. The South Garage charges $1.00 for the first hour and $0.50 for each additional hour.

Please plan accordingly, as cash is not accepted at parking garages, parking meters, etc.

If you receive a parking ticket, please bring it to the registration desk, or mail it to Miami University; Department of Mathematics; 204 Upham Hall; Oxford, OH 45056. The Math Department will then contact the Parking Office to request that the ticket be voided. We cannot guarantee that your ticket will be voided.

Miami's Annual Mathematics Conference is long-standing, first held in 1974.

| Year | Conference Theme | Buckingham Scholar | Bohn Lecturer |
|---|---|---|---|
| 1974 | Geometry | | |
| 1975 S | Statistics | | |
| 1975 F | 200 Years of Mathematics in America | | |
| 1976 | Recreational Mathematics | | |
| 1977 | Number Theory | | |
| 1978 | Applied Mathematics | | |
| 1979 | Geometry | | |
| 1980 | Statistics | | |
| 1981 | Emerging Trends in Math | | |
| 1982 | Math and Computing | | |
| 1983 | Operations Research | Bill Lucas | |
| 1984 | Mathematics Education | | |
| 1985 | Statistics | | |
| 1986 | Discrete Mathematics | | |
| 1987 | Computers and Mathematics | | |
| 1988 | Mathematical Recreations | | |
| 1989 | Issues in Teaching Calculus | | |
| 1990 | Linear Algebra and its Applications | | |
| 1991 | Statistics and its Applications | | |
| 1992 | History of Mathematics | | |
| 1993 | The Teaching and Learning of Undergraduate Mathematics | Judah Schwartz | Karen Geuther Graham |
| 1994 | Analysis and General Topology in the Undergraduate Curriculum | Grahame Bennett | |
| 1995 | Mathematical Modeling | Philip Straffin | |
| 1996 | Statistics | David Moore | Ray Myers |
| 1997 | Mathematics at Work | Terry Herdman | Andrew Sterrett |
| 1998 | Mathematics Classroom Demonstrations | | |
| 1999 | Experimental Mathematics | | |
| 2000 | Mathematical Pictures That Are Worth a Thousand Words | George Francis | |
| 2001 | Statistics in Sports | Hal Stern | |
| 2002 | History of Mathematics in America | David Zitarelli | |
| 2003 | Discrete Mathematics and Its Applications | Robin Thomas | |
| 2004 | Mathematics and Symmetry | | |
| 2005 | Mathematics and Biology | | |
| 2006 | Understanding Biological and Medical Systems Using Statistics | Louise Ryan | Eric Smith |
| 2007 | Number Theory | Avner Ash | |
| 2008 | Recreational Mathematics | | |
| 2009 | The Teaching of Undergraduate Mathematics | J. Michael Shaughnessy (Note: Unable to attend. Kichoon Yang presented one talk instead.) | |
| 2010 | Analysis in the Undergraduate Curriculum | Neal Carothers | |
| 2011 | Mathematics of Finance | Victor Goodman | |
| 2012 | Statistics in Sports | Jim Albert | Mark Glickman |
| 2013 | Undergraduate Research in Mathematics | Dennis Davenport | |
| 2014 | Optimization | Adam B. Levy | Dan Kalman |
| 2015 | Combinatorics | Jacques Verstraete | |
| 2016 | Differential Equations and Dynamical Systems | Chris Jones | Stephen Schecter |
| 2017 | Algebra and Connections to Geometry | Srikanth Iyengar | Chelsea Walton |
| 2018 | Making Mathematics Visual | George Hart | Steve Phelps |
| 2019 | Differential Equations and | | |
| 2020/2021 | Canceled | | |
| 2022 | History of Mathematics | David Richeson | |
| 2023 | Differential Equations and Dynamical Systems and their Applications | Todd Kapitula | Janet Best |
| 2024 | Mathematical Foundations of Machine Learning | Lek-Heng Lim | |
| 2025 | Mathematical Foundations of Cybersecurity | Lawrence C. Washington | Bruce Kapron |

1. The Buckingham Scholar-in-Residence was established in 1976. These speakers could have been so designated had the title existed at the time of their invited address.

2. The Bohn Lecture was initiated in 1992. These speakers could have been so designated had the title existed at the time of their invited address.